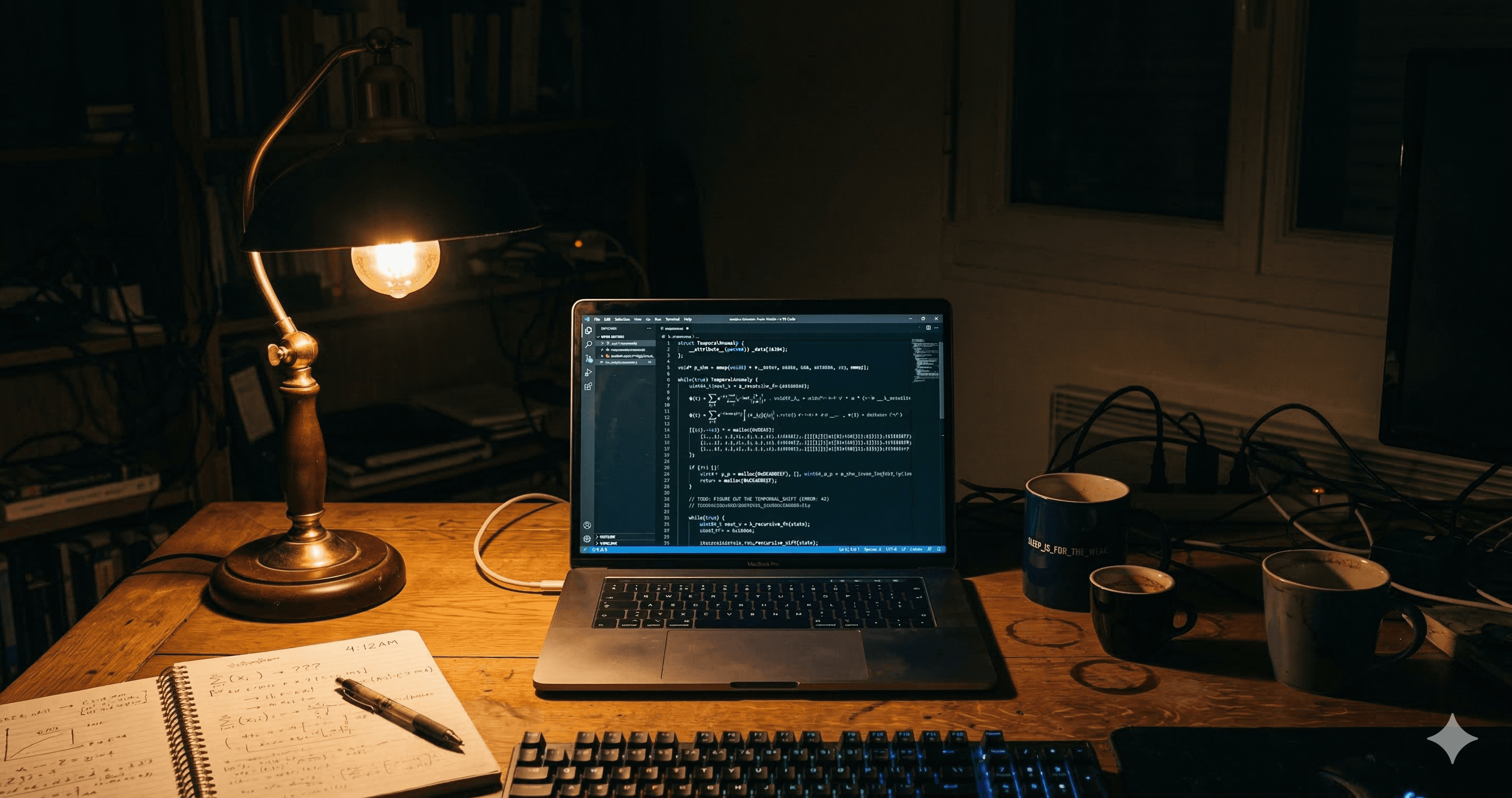

OpenAI WebSocket Mode for Responses API: How to Use It and Why It's a Game-Changer for AI Agents (2026)

OpenAI just launched WebSocket mode for the Responses API — a persistent connection that cuts latency by up to 40% in agentic workflows. Learn how it works, how to integrate it in voice agents, and the top use cases you need to know.

OpenAI has officially launched WebSocket mode for its Responses API (wss://api.openai.com/v1/responses) — a persistent, low-latency connection designed specifically for long-running agentic workflows. If you're building AI agents that loop through dozens of tool calls, this is the most impactful infrastructure update OpenAI has shipped in recent months.

What Is WebSocket Mode for the Responses API?

Unlike the traditional HTTP REST approach where every turn opens a brand-new connection, WebSocket mode lets your agent maintain a single persistent connection to /v1/responses across the entire workflow.

Each new turn sends only incremental inputs (new user messages or tool outputs) along with a previous_response_id reference — no need to resend full conversation history. This is made possible by a connection-local in-memory cache that the server keeps for your most recent response on that socket.

Key distinction: This is different from the existing OpenAI Realtime API (wss://api.openai.com/v1/realtime), which handles speech-to-speech audio. The new WebSocket mode is for the text/chat Responses API, aimed at orchestration, agentic coding, and tool-heavy pipelines.

Why This Matters: Performance Gains

The old pattern, a separate HTTP request per turn with the full context resent each time, adds significant overhead in agents that call many tools. WebSocket mode targets exactly this overhead.

| Workflow Type | HTTP REST Pattern | WebSocket Mode |
|---|---|---|
| Single-turn Q&A | Fine | Fine |
| 5–10 tool call loop | Moderate overhead | Faster |
| 20+ tool call chain | High overhead, slow | Up to ~40% faster |
| ZDR / | Works | Fully compatible |
| Parallel runs | N/A | Multiple connections needed |

The in-memory cache is the key. Instead of re-hydrating context from disk on every turn, the server reuses connection-local state for continuation — making each round-trip meaningfully faster in long agent loops.
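To get a feel for why resending history hurts long loops, here is a rough back-of-the-envelope sketch. It only counts input items uploaded over a run, under the simplifying assumption (mine, not OpenAI's) that each turn contributes one new input item and one model output item. It does not model the server-side cache or reproduce the ~40% figure.

```python
def full_history_items(turns: int) -> int:
    """Items uploaded in total when every turn resends the whole thread."""
    total = 0
    thread_len = 0
    for _ in range(turns):
        thread_len += 1      # the new input item for this turn
        total += thread_len  # the entire thread is uploaded again
        thread_len += 1      # the model's output joins the thread
    return total


def incremental_items(turns: int) -> int:
    """Items uploaded in total when each turn sends only its new input."""
    return turns  # one new item per turn under the assumption above


if __name__ == "__main__":
    for n in (1, 5, 20):
        print(n, full_history_items(n), incremental_items(n))
```

Under this toy model the full-history pattern grows quadratically (400 items for a 20-turn loop) while the incremental pattern grows linearly (20 items), which is the gap that widens as tool chains get longer.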

How to Connect: Step-by-Step

Step 1 — Open the Connection

Install the websocket-client library if using Python (pip install websocket-client), then connect with your API key:

```python
import json
import os

from websocket import create_connection

# Open one persistent connection; it is reused for every turn of the workflow.
ws = create_connection(
    "wss://api.openai.com/v1/responses",
    header=[
        f"Authorization: Bearer {os.environ['OPENAI_API_KEY']}",
    ],
)
```

Step 2 — Send Your First response.create

Fire the first turn with the full system prompt, tools, and the user's initial message:

```python
# First turn: full context (tools plus the initial user message).
ws.send(json.dumps({
    "type": "response.create",
    "model": "gpt-5.2",
    "store": False,
    "input": [
        {
            "type": "message",
            "role": "user",
            "content": [{"type": "input_text", "text": "Find the fizz_buzz() function in my codebase."}],
        }
    ],
    "tools": [
        # your tool definitions here
    ],
}))
```
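After sending a turn you still need to read the server's events to learn the response ID and any tool calls. The sketch below is one way to do that, assuming WebSocket mode emits the same event types as HTTP streaming on the Responses API (`response.output_text.delta`, `response.completed`, and so on). The helper name `read_until_completed` is hypothetical, and `recv` can be any callable that returns one JSON frame, such as `ws.recv`.

```python
import json
from typing import Callable


def read_until_completed(recv: Callable[[], str]) -> dict:
    """Read events until the response completes; return id, text, tool calls.

    Assumes streaming-style events; adjust the event names if the server
    sends something different.
    """
    text_parts = []
    while True:
        event = json.loads(recv())
        kind = event.get("type")
        if kind == "response.output_text.delta":
            # Accumulate streamed text chunks in arrival order.
            text_parts.append(event.get("delta", ""))
        elif kind == "response.completed":
            response = event["response"]
            calls = [
                item for item in response.get("output", [])
                if item.get("type") == "function_call"
            ]
            return {
                "id": response["id"],
                "text": "".join(text_parts),
                "function_calls": calls,
            }
        elif kind in ("error", "response.failed"):
            raise RuntimeError(f"response did not complete: {event}")
        # Any other event type is ignored in this sketch.
```

With a live socket this would be called as `result = read_until_completed(ws.recv)`, and `result["id"]` becomes the `previous_response_id` for the next turn.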

Step 3 — Continue Turns Incrementally

For every follow-up turn, only send new inputs + chain from the previous response ID. Never resend full conversation history.

```python
# Follow-up turn: only the new items, chained to the previous response.
ws.send(json.dumps({
    "type": "response.create",
    "model": "gpt-5.2",
    "store": False,
    "previous_response_id": "resp_abc123",
    "input": [
        {
            "type": "function_call_output",
            "call_id": "call_xyz",
            # Serialize tool results as valid JSON rather than a hand-written string.
            "output": json.dumps({"result": "function found at line 42"}),
        },
        {
            "type": "message",
            "role": "user",
            "content": [{"type": "input_text", "text": "Now optimize it for performance."}],
        },
    ],
    "tools": [],
}))
```
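In a real agent the `function_call_output` items come from actually running the tools the model asked for. Here is a minimal sketch of that step, assuming each tool call item carries `name`, `arguments` (a JSON string), and `call_id` as in the Responses API function-calling format. The helper names and the `registry` mapping are hypothetical, not part of any SDK.

```python
import json
from typing import Callable, Dict, List, Optional


def run_tool_calls(function_calls: List[dict],
                   registry: Dict[str, Callable[..., object]]) -> List[dict]:
    """Execute each requested tool and wrap its result as an input item."""
    outputs = []
    for call in function_calls:
        handler = registry.get(call["name"])
        if handler is None:
            # Report unknown tools back to the model instead of crashing.
            result = {"error": f"unknown tool: {call['name']}"}
        else:
            args = json.loads(call.get("arguments") or "{}")
            result = handler(**args)
        outputs.append({
            "type": "function_call_output",
            "call_id": call["call_id"],
            "output": json.dumps(result),
        })
    return outputs


def continuation_payload(previous_response_id: str,
                         new_items: List[dict],
                         model: str = "gpt-5.2",
                         tools: Optional[List[dict]] = None) -> dict:
    """Build a follow-up turn that carries only the new items."""
    return {
        "type": "response.create",
        "model": model,
        "store": False,
        "previous_response_id": previous_response_id,
        "input": new_items,
        "tools": tools or [],
    }
```

A turn then becomes `ws.send(json.dumps(continuation_payload(last_id, run_tool_calls(calls, registry))))`, which keeps the incremental-input rule in one place.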

Step 4 — Warm Up for Faster First-Turn Response

Pre-warm the connection with generate: false to load context into cache before the user speaks:

```python
# Warm-up request: load instructions and tools without generating output.
ws.send(json.dumps({
    "type": "response.create",
    "model": "gpt-5.2",
    "store": False,
    "generate": False,
    "input": [
        {
            "type": "message",
            "role": "system",
            "content": [{"type": "input_text", "text": "You are a helpful booking assistant."}],
        }
    ],
    "tools": [],
}))
```

Integrating With Voice Agents

The WebSocket Responses API is the orchestration brain of your voice agent pipeline. Here's the full architecture:

```text
User speaks
  ↓
[STT: Whisper / Deepgram]
  ↓ (transcript text)
[Responses API WebSocket] ← persistent connection
  ↓ (text + tool calls)
[Tool Execution Layer] (calendar, CRM, search, etc.)
  ↓ (tool result)
[Responses API WebSocket] ← incremental continuation
  ↓ (final text response)
[TTS: OpenAI TTS / ElevenLabs]
  ↓
User hears response
```

Why not just use the Realtime API for everything? The Realtime API (/v1/realtime) is best for native speech-to-speech without intermediate text. But if you need custom tool execution logic, text processing middleware, or store=false ZDR compliance, the Responses API WebSocket + STT + TTS pattern gives you far more control.
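The STT → Responses → TTS pattern can be sketched as one function with the three stages injected as callables. Everything here is illustrative: `handle_utterance`, `transcribe`, `respond`, and `synthesize` are hypothetical names, and in a real system `respond` would wrap the WebSocket turn (including any tool-call loop) while the other two would call your STT and TTS providers.

```python
from typing import Callable


def handle_utterance(audio: bytes,
                     transcribe: Callable[[bytes], str],
                     respond: Callable[[str], str],
                     synthesize: Callable[[str], bytes]) -> bytes:
    """Run one voice turn: audio in, audio out, text in the middle."""
    transcript = transcribe(audio)
    if not transcript.strip():
        # Nothing intelligible was said; skip the model call entirely.
        return b""
    reply_text = respond(transcript)
    return synthesize(reply_text)
```

Keeping the middle of the pipeline as plain text is the design point: it gives you a place to add middleware, logging, or custom tool logic between the speech stages.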

Key Use Cases

1. Agentic Coding Assistants

An AI coding agent that runs read_file → analyze → edit → run_tests → fix → run_tests in a loop is exactly what this is built for. With chains of 20+ tool calls running up to 40% faster, Cursor-style coding tools stand to benefit the most.

2. Voice-Based Customer Support Bots

Phone bots (built with Twilio, Plivo, or Exotel) can now use the Responses API WebSocket as the brain — keeping one persistent connection open per call session, handling CRM lookups, booking confirmations, and escalation logic through tool calls, all over a single socket.

3. Real-Time Orchestration Pipelines

Multi-agent orchestration systems — where a supervisor agent delegates tasks to sub-agents — benefit from incremental input continuation. Each delegation round trip doesn't re-upload the full context.

4. Long-Running Research Agents

An agent that browses the web, reads documents, calls search APIs, and synthesizes answers can now run a full 30–50 step pipeline without latency overhead accumulating at every turn.

5. AI Tutors and Learning Bots

Educational platforms running multi-turn Socratic dialogue with code execution and adaptive questioning can maintain session state on one persistent connection per student, with clean ZDR compliance for student data privacy.

How It Improves Existing Agents

No repeated context uploads: only new items are sent per turn, not the full thread

Connection-local cache: the server reuses in-memory state instead of loading from disk on each turn

ZDR-compatible: works with store=false, so no conversation data is persisted to OpenAI servers

Warmup support: pre-load tools and instructions before the user's first message to eliminate cold-start latency

Sequential safety: runs are executed one at a time on a connection, preventing race conditions
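Since a connection handles one run at a time, a client that shares a socket between threads may want to enforce the same rule on its side. Below is a minimal sketch using a lock, so that a send and the matching read always happen as one unit. The `SerializedConnection` class and the `read_response` callable are hypothetical; `ws` is any object with `send` and `recv` methods, such as a websocket-client connection.

```python
import json
import threading
from typing import Callable


class SerializedConnection:
    """Wrap a socket-like object so only one run is in flight at a time."""

    def __init__(self, ws):
        self._ws = ws
        self._lock = threading.Lock()

    def run(self, payload: dict, read_response: Callable[[Callable[[], str]], object]):
        """Send one request and read its full response while holding the lock."""
        with self._lock:
            self._ws.send(json.dumps(payload))
            # read_response pulls frames via ws.recv until the run is done.
            return read_response(self._ws.recv)
```

For genuinely parallel agent runs this wrapper is not the answer; each run should get its own connection, with one wrapper per socket.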

Connection Limits and Error Handling

Max 60 minutes per WebSocket connection: implement a reconnect handler that resumes from the last response_id

No multiplexing: if you need parallel agent runs, open separate connections

previous_response_not_found: returned when the cached ID is missing; handle by sending full context again or using /responses/compact first

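```python
def reconnect_and_continue(last_response_id, full_context):
    """Open a fresh socket and continue the chain from last_response_id."""
    ws = create_connection(
        "wss://api.openai.com/v1/responses",
        header=[f"Authorization: Bearer {os.environ['OPENAI_API_KEY']}"],
    )
    # The connection-local cache does not carry over to a new socket, so the
    # ID presumably has to be resolved from stored state, hence store=True.
    ws.send(json.dumps({
        "type": "response.create",
        "model": "gpt-5.2",
        "store": True,
        "previous_response_id": last_response_id,
        "input": full_context,
        "tools": [],
    }))
    return ws
```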
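Two small helpers make the limits above easier to handle: one decides when to rotate a connection before the 60-minute cap, and one detects `previous_response_not_found` so you can fall back to a fresh chain. This is a sketch under assumptions: the five-minute margin is arbitrary, the helper names are hypothetical, and the error event shape (`{"type": "error", "error": {"code": ...}}`) is assumed rather than taken from this article.

```python
import time
from typing import List, Optional

MAX_CONNECTION_SECONDS = 60 * 60  # connection limit quoted above
ROTATE_MARGIN_SECONDS = 5 * 60    # hypothetical safety margin


def should_rotate(opened_at: float, now: Optional[float] = None) -> bool:
    """True once the connection is close enough to the 60-minute cap."""
    current = time.monotonic() if now is None else now
    return (current - opened_at) >= MAX_CONNECTION_SECONDS - ROTATE_MARGIN_SECONDS


def is_missing_previous_response(event: dict) -> bool:
    """True if an error event reports previous_response_not_found (assumed shape)."""
    if event.get("type") != "error":
        return False
    return (event.get("error") or {}).get("code") == "previous_response_not_found"


def fallback_payload(full_context: List[dict], model: str = "gpt-5.2") -> dict:
    """Start a fresh chain: no previous_response_id, full context resent."""
    return {
        "type": "response.create",
        "model": model,
        "store": False,
        "input": full_context,
        "tools": [],
    }
```

Record `opened_at = time.monotonic()` when the socket opens, check `should_rotate(opened_at)` between turns, and send `fallback_payload(...)` when `is_missing_previous_response(event)` is true.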

/responses/compact — Your Context Window Safety Net

For very long agent runs that approach context limits, use /responses/compact to compress history, then start a fresh chain:

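```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

# Compress the long history into a shorter set of items.
compacted = client.responses.compact(model="gpt-5.2", input=long_input_array)

# Start a fresh chain from the compacted items plus the next user message.
# Note: json.dumps needs plain dicts; if compacted.output holds SDK model
# objects, convert them first (for example with item.model_dump()).
ws.send(json.dumps({
    "type": "response.create",
    "model": "gpt-5.2",
    "store": False,
    "previous_response_id": None,
    "input": [
        *compacted.output,
        {
            "type": "message",
            "role": "user",
            "content": [{"type": "input_text", "text": "Continue from here."}],
        },
    ],
    "tools": [],
}))
```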

Quick Reference: Which Transport to Use

| Scenario | Best Transport |
|---|---|
| Browser voice app (mic input) | WebRTC ( |
| Server-to-server speech-to-speech | WebSocket Realtime API |
| Server agent with many tool calls | WebSocket Responses API (new) |
| Simple single-turn chat | HTTP REST |
| Long agentic coding / research runs | WebSocket Responses API (new) |

OpenAI's new WebSocket mode for the Responses API marks a clear architectural shift — from stateless HTTP calls to stateful, session-aware agent connections. For any developer building production AI agents in 2026, this is the right transport layer to adopt now.