How to Do SEO for LLMs: Making Your Website Visible to AI Models

Learn practical strategies for LLM SEO in 2025. Discover how to use llms.txt, llms-full.txt, and structured content to make your website visible and r

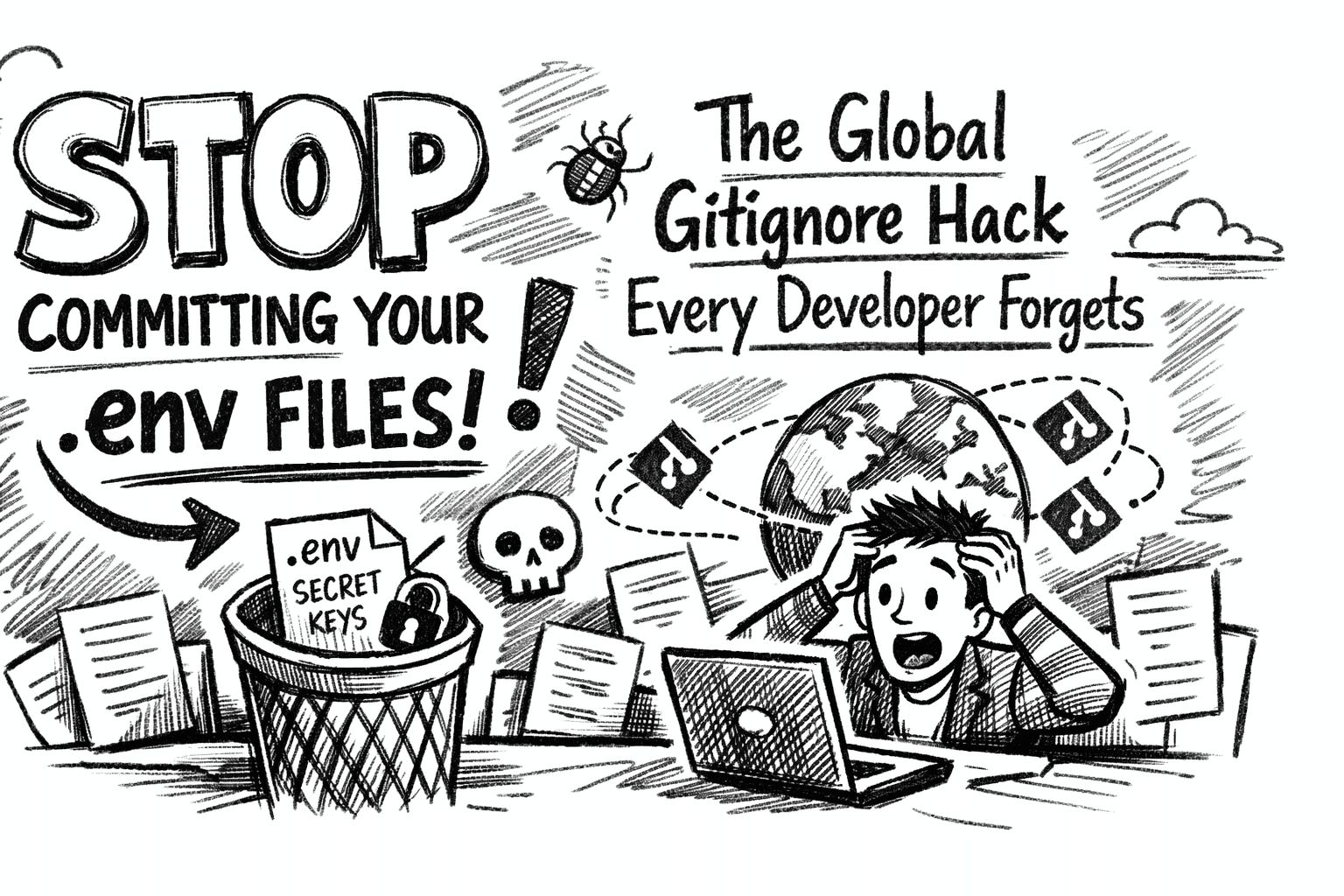

So, Is SEO Dead? Again? A Developer's Look at "LLM SEO"

For the last two decades, "SEO" meant one thing: appeasing the great and powerful Google. You'd obsess over keywords, build backlinks, tweak your H1 tags, and pray to appear on the first page. That game isn't gone, but let's be honest, the ground is shaking.

Why? Because millions of people, myself included, are skipping Google for a growing number of queries. We're asking ChatGPT, Perplexity, Claude, or Gemini to just give us the answer. And those answers don't just link out—they synthesize, summarize, and quote information directly, often citing their sources.

If your site is invisible to these models, or if your content is a jumbled mess they can't understand, you risk disappearing from this new wave of discovery.

So, what's the deal with "LLM SEO"? Is it just another pile of marketing hype, or is it something you actually need to worry about? As a developer, I've been digging into this, and the answer is... well, it's complicated. But yes, it's real. Here's a no-fluff guide to what actually matters.

What Is LLM SEO (And How Is It Different)?

LLM SEO is about optimizing your site to be read, understood, and used by large language models. The key difference is in the intent of the machine.

Google (Traditional Search): Crawls the web to build a massive index. Its job is to rank links based on hundreds of signals (relevance, authority, backlinks, user experience, etc.).

LLMs (Answer Engines): Ingest massive datasets (including web crawls) to build a knowledge model. Their job is to synthesize an answer from multiple sources.

When you ask an LLM a question, it's not just matching keywords. It's trying to find factual, well-explained snippets of text, either in its training data or in pages it retrieves at query time, to construct a new paragraph. If your site isn't structured for easy parsing, you're not going to be one of those sources. It's that simple.

This isn't magic. It’s about removing friction.

The New "Standard" That Isn't a Standard: llms.txt

You've probably seen robots.txt (which tells bots where not to go) and sitemap.xml (which gives them a map of everything). Now, there's a new convention floating around: llms.txt.

Let's be perfectly clear: This is not an official standard. There's no W3C stamp of approval. It's an experiment, a proposed convention that some AI companies (like OpenAI) have acknowledged and said they're "considering."

The idea is to give AI crawlers a curated guide, separate from your sitemap.

llms.txt (The Curated Index): This is the short, "executive summary" for an AI. You're supposed to put your most important pages here. Think of it like a sticky note you're leaving for the bot: "Hey, if you only read 10 pages, read these." It's best written in Markdown for human readability.

Example (/llms.txt):

    # MySite: A Curated Index for AI

    > We are a platform connecting developers with projects.

    ## Core Mission

    - [About Us](https://mysite.com/about): Our mission and team.
    - [How it Works](https://mysite.com/how): A guide for developers.

    ## Key Content

    - [Blog](https://mysite.com/blog): Our best articles on AI and career dev.
    - [Project Showcase](https://mysite.com/projects): Featured projects.

    ## Notes

    - Please crawl politely.
    - For a full list of all pages, see our sitemap.xml.

llms-full.txt (The Bulk Data-Dump): This is the more exhaustive version. If llms.txt is the curated list, this is the "drink from the firehose" list. It's where you might dump all your blog post URLs, all your public profile pages, etc.

Honestly, this one feels more like a temporary hack until crawlers get better at parsing sitemaps, but it can't hurt.
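If you'd rather not maintain that file by hand, here is a minimal sketch of generating it from a list of pages. The `SECTIONS` data, the function names, and the output path are all hypothetical; the titles and URLs are copied from the example above. Link notes are separated from the link by a colon, which is the form the llms.txt proposal describes.

```python
from pathlib import Path

# Hypothetical site data: (link text, URL, short note) grouped by section.
SECTIONS = {
    "Core Mission": [
        ("About Us", "https://mysite.com/about", "Our mission and team."),
        ("How it Works", "https://mysite.com/how", "A guide for developers."),
    ],
    "Key Content": [
        ("Blog", "https://mysite.com/blog", "Our best articles on AI and career dev."),
        ("Project Showcase", "https://mysite.com/projects", "Featured projects."),
    ],
}


def render_llms_txt(title, summary, sections):
    """Return the llms.txt body as a Markdown string."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


def write_llms_txt(path, body):
    """Write the file to disk so the web server can serve it statically."""
    Path(path).write_text(body, encoding="utf-8")


if __name__ == "__main__":
    print(
        render_llms_txt(
            "MySite: A Curated Index for AI",
            "We are a platform connecting developers with projects.",
            SECTIONS,
        )
    )
```

Running something like this from a build step or a scheduled job, and calling `write_llms_txt` into your public directory, means the bots only ever hit a static file.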

The Boring Stuff That Still Matters More Than Anything

Before you run off and create an llms.txt file, let's talk about the 90% of the work that actually matters. None of the new stuff works if your site is fundamentally broken.

LLMs and Google crawlers are eating from the same trough. If your site is a mess for Google, it's a mess for everyone.

This means you still have to do the "old" SEO:

A Valid robots.txt: Make sure you're not Disallow-ing bots from your key content.

A Clean sitemap.xml: Keep it updated. This is still the primary map most crawlers will use.

Clean HTML & Structure: This is so important. Use your <h1>, <h2>, <h3> tags properly. Write in clear paragraphs (<p>). Use lists (<ul>, <ol>). A well-structured HTML document is trivial for a machine to parse. A "div soup" of styling-first HTML is not.

Structured Data (schema.org): This is your secret weapon. It's literally a cheat sheet for machines. By adding a JSON-LD script to your page, you're not hoping the AI figures out what the content is; you're telling it, explicitly.
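On the robots.txt point from the list above: one quick way to confirm you aren't accidentally blocking AI crawlers is to run your rules through Python's standard-library parser. This is a rough sketch, not a full audit. The bot names come from this article, while the sample rules, the page URLs, and the `blocked_pages` helper are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Bot names mentioned in this article; extend the list as needed.
AI_BOTS = ["ChatGPT-User", "PerplexityBot"]


def blocked_pages(robots_txt, urls, bots=AI_BOTS):
    """Return (bot, url) pairs that the given robots.txt text disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [
        (bot, url)
        for bot in bots
        for url in urls
        if not parser.can_fetch(bot, url)
    ]


if __name__ == "__main__":
    # Hypothetical rules: PerplexityBot is shut out of /blog by mistake.
    sample = (
        "User-agent: PerplexityBot\n"
        "Disallow: /blog\n"
        "\n"
        "User-agent: *\n"
        "Disallow: /admin\n"
    )
    pages = ["https://mysite.com/blog", "https://mysite.com/about"]
    for bot, url in blocked_pages(sample, pages):
        print(f"{bot} is blocked from {url}")
```

Feed it the real contents of your robots.txt and the handful of URLs you most want cited. An empty result means none of the listed bots are disallowed from those pages.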

For example, on a blog post, you should have Article schema. This tells the bot the headline, the author, the publish date, and the image, no guessing required.

Example (JSON-LD for an Article):

    <script type="application/ld+json">
    {
      "@context": "https://schema.org",
      "@type": "Article",
      "headline": "A Developer's Grumpy Guide to LLM SEO",
      "author": {
        "@type": "Person",
        "name": "Aman Raj"
      },
      "datePublished": "2025-10-25",
      "description": "A no-fluff guide to what LLM SEO is, whether it's hype, and what you actually need to do."
    }
    </script>

This is probably more important than llms.txt right now.
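If your pages are rendered from templates, you probably don't want to hand-write that JSON for every post. Here is a small sketch of building the same Article object from post metadata. The function names are hypothetical, and it only covers the fields used in the example above.

```python
import json


def article_json_ld(headline, author_name, date_published, description):
    """Build a schema.org Article object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "description": description,
    }


def to_script_tag(data):
    """Serialize the dict into a JSON-LD <script> element."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    # "\/" is a valid JSON escape, so parsers still read it as "/".
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    post = article_json_ld(
        "A Developer's Grumpy Guide to LLM SEO",
        "Aman Raj",
        "2025-10-25",
        "A no-fluff guide to what LLM SEO is.",
    )
    print(to_script_tag(post))
```

Going through `json.dumps` instead of string concatenation means quotes and other special characters in a headline can't produce invalid JSON.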

Don't Get Scraped to Death: The "Oh Crap" Moment

Okay, here's the double-edged sword. Inviting these new bots (like ChatGPT-User, PerplexityBot, etc.) is great for visibility. But some of them can be aggressive.

If you have a site where every page (like a user profile) hits your database for a live query, you are setting yourself up for a very bad, very expensive day. A popular AI model deciding to index your 100,000 user profiles could easily look like a DDoS attack to your servers and your wallet.

Best Practices:

Cache Everything: Those llms.txt files? Don't generate them on the fly. Make them static files that get regenerated on a schedule.

Static Content is King: Serve as much of your site as static, pre-rendered HTML as possible. Let the bots consume that instead of hammering your dynamic endpoints and APIs.

Rate Limit: Be aggressive with rate-limiting bots at your web server (Nginx, Caddy) or CDN level.

robots.txt is Not Security: A final reminder: robots.txt is a suggestion. It's a "please don't enter" sign on an unlocked door. Malicious bots will ignore it. If a page contains truly private data, it should be behind an authentication wall, period.
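To make the rate-limiting idea concrete, here is a toy token bucket keyed by User-Agent. Treat it purely as an illustration of the logic: in practice you'd lean on your web server's or CDN's built-in limiter, as suggested above. The class names and default numbers are invented for this sketch, and since a User-Agent string is trivially spoofed, a real setup would likely key on IP address as well.

```python
import time


class TokenBucket:
    """Allow `rate` requests per second, with bursts up to `capacity`."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        """Refill based on elapsed time, then try to spend one token."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class BotLimiter:
    """Keep one bucket per User-Agent string."""

    def __init__(self, rate=1.0, capacity=5, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets = {}

    def allow(self, user_agent):
        bucket = self.buckets.get(user_agent)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[user_agent] = bucket
        return bucket.allow()


if __name__ == "__main__":
    limiter = BotLimiter(rate=1.0, capacity=2)
    for i in range(4):
        verdict = "ok" if limiter.allow("PerplexityBot") else "429 Too Many Requests"
        print(i, verdict)
```

The `clock` parameter exists only so the behavior can be tested without sleeping; the default uses `time.monotonic`.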

How to Write for Robots (Without Sounding Like One)

This is the part that makes writers cringe, but it's crucial. LLMs are looking for clear, factual, explanatory text. They want to quote things.

Answer Questions Directly: Structure content around "What is," "How to," and "Why."

Use Plain English: The more your content sounds like a textbook, a "For Dummies" guide, or a good Wikipedia entry, the better.

Avoid Fluff: AI models are getting very good at ignoring generic, "sales-y" marketing copy. "Unlock your potential with our synergistic solution..." is just noise. "Our tool solves [problem] by doing [X, Y, and Z]" is data.

This is Not Keyword Stuffing: In fact, stuffing keywords will probably get your content flagged as low-quality. LLMs understand semantic context. They don't need you to repeat "LLM SEO Guide" 15 times. They need you to explain what it is, contextually.

So... How Do I Know If It's Working?

This is the annoying part: you don't, really. Not yet.

There's no "Google Search Console for LLMs" (though one is probably being built). Right now, it's a bit of a black box. The best you can do is:

Check Your Logs: grep your server logs for bot User-Agents like PerplexityBot, ChatGPT-User, or Anthropic-ai. Are they hitting your site? Are they visiting your llms.txt file?

Monitor Citations: Go to an AI tool like Perplexity and ask it a question you know your site answers well. Does your site show up as a source?

Stay Updated: This whole space is moving stupidly fast. What works today might be obsolete in six months.
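For the log check, grep gets you a yes or no. If you want a breakdown of which bot hit which path, a short script does the job. This sketch assumes your access log is in the common "combined" format that Nginx and Apache use by default; the regex, the function name, and the bot list (taken from this article) are all things you'd adjust for your own setup.

```python
import re
from collections import Counter

# Bot names as listed in this article; matching is case-insensitive.
BOT_NAMES = ["PerplexityBot", "ChatGPT-User", "Anthropic-ai"]

# Combined log format: ... "METHOD path PROTO" status size "referer" "user-agent"
LINE_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def count_bot_hits(lines, bots=BOT_NAMES):
    """Return a Counter keyed by (bot, path) for requests made by known bots."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue  # Not a combined-format request line; skip it.
        agent = match.group("agent").lower()
        for bot in bots:
            if bot.lower() in agent:
                hits[(bot, match.group("path"))] += 1
    return hits


if __name__ == "__main__":
    # Made-up sample lines; point this at your real access log instead.
    sample = [
        '203.0.113.5 - - [25/Oct/2025:10:00:00 +0000] "GET /llms.txt HTTP/1.1" '
        '200 512 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
        '198.51.100.7 - - [25/Oct/2025:10:00:05 +0000] "GET /blog HTTP/1.1" '
        '200 2048 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    ]
    for (bot, path), count in count_bot_hits(sample).items():
        print(f"{bot} requested {path} {count} time(s)")
```

To run it against a real log, pass an open file object as `lines`; the function only needs an iterable of strings.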

Final Thoughts

Look, let's zoom out. LLM SEO isn't voodoo. It's not some radical reinvention of the wheel. It's just the next logical step of "technical SEO."

You're just making your site's content as easy to digest as possible for a new, very literal, and very powerful type of reader.

The llms.txt thing is an experiment. The real work, the stuff that will pay off for both Google and the AIs, is in the "boring" stuff: clean HTML, structured schema.org data, and clear, authoritative writing. Get that right, and you're 90% of the way there.

A quick note from the author...

Writing this stuff down helps me organize my own thoughts as a developer. I’m Aman, a freelance full-stack dev, and my day job is to build, scale, and optimize the very kinds of websites we've been talking about.

This intersection of code, content, and new tech is what I love. If you're building a project and need someone who thinks about the full picture—from the database to the UI and now, apparently, to the AI crawlers—you can find my work and get in touch at amanraj.me.